Rent NVIDIA H100 SXM – Specialised for High-Performance Computing

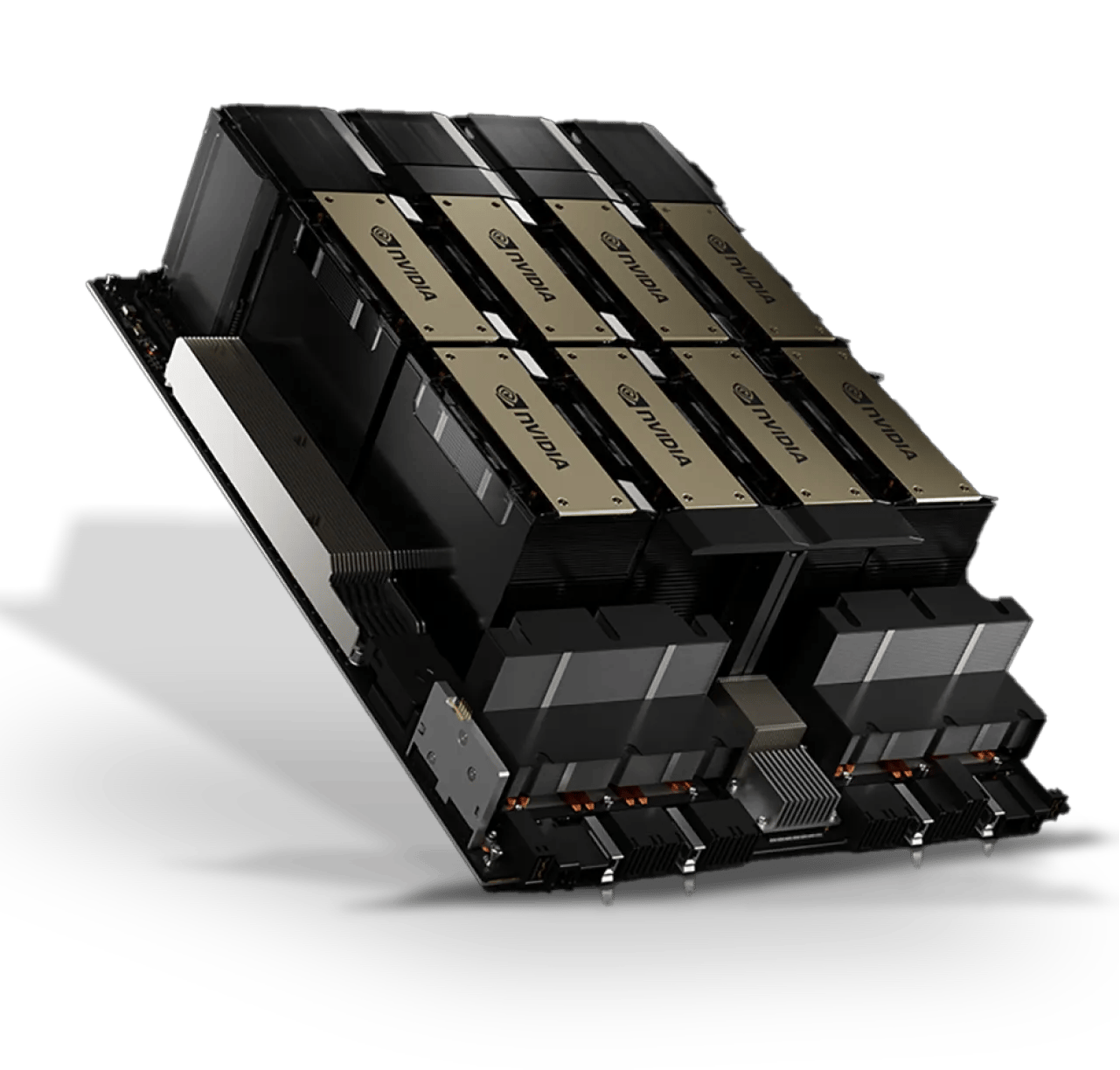

Introducing the most advanced AI cluster of its kind: Hyperstack’s NVIDIA H100 SXM is built on custom DGX reference architecture. Deploy from 8 to 16,384 cards in a single cluster - only available through Hyperstack's Supercloud reservation.

The Largest Single Clusters of NVIDIA H100s

We ensure a surplus of power to run even the most demanding workloads. With up to 16,384 H100 80G cards operating in a single cluster, there are no break points, allowing for multitenant capabilities.

Built with NVIDIA DGX Protocols

Built on NVIDIA DGX reference architecture, the NVIDIA H100 SXM model integrates seamlessly into the DGX ecosystem, providing a comprehensive solution for enterprise-level AI development and applications.

Unmatched Network Connectivity

While most platforms boast “fast” connectivity, typically ranging from 200 Gbps to 800 Gbps, Hyperstack's Supercloud operates at 3.2 Tbps (3,200 Gbps), which is 4 to 16 times the bandwidth of those platforms.
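To put those figures in perspective, the short sketch below estimates the ideal time to move a payload at each quoted line rate (200, 800 and 3,200 gigabits per second). It is back-of-the-envelope arithmetic only: the `transfer_seconds` helper and the 1 TB payload are assumptions chosen for illustration, and real-world throughput will be lower once protocol overhead and contention are taken into account.

```python
# Illustrative arithmetic only: ideal transfer time at a given line rate.
# The helper name and the 1 TB payload are assumptions, not Hyperstack figures.

def transfer_seconds(payload_bytes: float, link_gbps: float) -> float:
    """Ideal seconds to move payload_bytes over a link of link_gbps (10^9 bits/s)."""
    bits = payload_bytes * 8
    return bits / (link_gbps * 1e9)

PAYLOAD_BYTES = 1e12  # hypothetical 1 TB dataset shard

for gbps in (200, 800, 3200):
    seconds = transfer_seconds(PAYLOAD_BYTES, gbps)
    print(f"{gbps:>5} Gbps: {seconds:.1f} s")
```

Under these assumptions the same payload moves in a fraction of the time at the higher line rate, which matters most for workloads that exchange large volumes of data between nodes.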

NVIDIA H100 SXM

Pricing starts from $1.90/hour

Unrivalled Performance in…

AI Training & Inference

Up to 30x faster inference and up to 9x faster training than the NVIDIA A100.*

LLM Performance

Up to 30x faster processing*, enhancing large language model performance.

Single Cluster Scalability

The AI Supercloud environment is the largest single cluster of H100s available outside of Public Cloud.

Connectivity

Specifically designed to allow all nodes to fully utilise their CPU, GPU and network capabilities without limits.

Customised & Scalable Service Delivery

Bespoke Solutions for Diverse Needs

Every business is unique, and at Hyperstack, we tailor our service delivery to match your specific requirements. We personally onboard you to the Supercloud, understanding your unique challenges and objectives, ensuring a solution that aligns perfectly with your business goals.

Scalability at Your Fingertips

Flexibility and scalability are the cornerstones of our service delivery model. With clusters of up to 16,384 cards, we deliver scale and performance for AI workloads that you will not find in any other service outside of public cloud.

SuperCloud Storage

The WEKA® Data Platform offers a comprehensive and integrated data management solution tailored for Supercloud environments. This platform is designed to support dynamic data pipelines, providing high-performance data storage and processing capabilities that cater to every phase of the data lifecycle, including data ingestion, cleansing, modelling, training validation, and inference.

Scalable Performance

The WEKA Data Platform is designed to deliver high I/O, low latency, and support for small files and mixed workloads, which is crucial for the performance-intensive nature of AI applications.

Organisations can independently and linearly scale their compute and storage in the cloud, efficiently handling tens of millions or even billions of files of all data types and sizes. Certified for NVIDIA DGX SuperPOD.

Simplified Data Management

The platform is multi-tenant, multi-workload, multi-performant, and multi-location, with a common management interface, which simplifies data management of complex AI pipelines across various environments.

Secure & Compliant

WEKA provides encryption for data in-flight and at-rest, ensuring compliance and governance for sensitive AI data.

Energy Efficient

The platform lowers energy consumption and reduces carbon emissions by cutting data pipeline idle time, extending the usable life of hardware, and facilitating workload migration to the cloud.

The WEKA Data Platform can be utilised as part of Supercloud environments or as an additional option for the standard cloud offering through Hyperstack, providing shared NAS and object storage with support for snapshots and cloning.

Dedicated Onboarding & Support Team

Our Dedicated Onboarding & Support Team is your reliable partner, providing expert guidance and support to ensure a smooth onboarding experience and ongoing assistance, empowering you to achieve your goals with confidence.

24/7 Expert Assistance

Our commitment to excellence extends to our customer support. We offer 24/7 assistance, ensuring that expert help is always available whenever you need it. Our team is equipped to handle any query or challenge you might encounter with the HGX SXM5 H100 and your Supercloud environment.

Personalised Support & Onboarding

At Hyperstack, we believe in providing a customer support experience that goes beyond the conventional. We offer personalised, comprehensive solutions, dedicated to onboarding the perfect environment specialised to your needs.

Technical Expertise and Deep Product Knowledge

Our support team possesses deep technical expertise and extensive product knowledge. This ensures that any support you receive for the NVIDIA H100 SXM and Supercloud onboarding is not just general guidance but informed, specialised assistance tailored to the nuances of this advanced technology.

Benefits of NVIDIA H100 SXM

SXM Form Factor

High-density GPU configurations, efficient cooling, and energy optimisation with the superior SXM form factor.

DGX reference architecture

Designed with DGX reference architecture to meet the rigorous demands of enterprise-level AI and Machine Learning applications.

Scalable Design

Modular architecture for seamless scalability to meet evolving computational needs, built in single clusters of up to 16,384 H100 cards.

TDP of 700 W

Designed to operate at a higher TDP compared to the PCIe version, the SXM H100 is ideal for the most intensive AI and HPC applications that demand peak computational power.

NVLink & NVSwitch

The HGX SXM5 H100 utilises NVLink and NVSwitch technologies, providing significantly higher interconnect bandwidth compared to our PCIe version.

GPU Direct Technology

Enhanced data movement and improved performance: read and write to/from GPU memory, eliminating unnecessary memory copies, decreasing CPU overheads and reducing latency.

NVIDIA H100 SXM

Delivery in Up to 8 Weeks for Clusters of Up to 16,384 NVIDIA H100 SXM Cards!

Technical Specifications

Only available through Supercloud reservation

Frequently Asked Questions

Our technical team is dedicated to onboarding NVIDIA H100 SXM users and is ready to help you set up a Supercloud environment in any configuration you require.

What is the difference between NVIDIA's H100 SXM and PCIe models?

Both NVIDIA's H100 SXM and PCIe models are powerful GPUs, but they differ in connectivity and performance. PCIe is flexible and comparatively lower in cost, while SXM offers higher memory bandwidth, faster GPU-to-GPU data transfer over NVLink and a higher power limit (up to 700 W) for extreme performance.

What is NVIDIA H100 SXM?

NVIDIA's H100 SXM is a high-performance GPU designed for data centres, optimised for demanding workloads like AI, scientific simulations and big data analytics.

How much faster is NVIDIA H100 than NVIDIA A100?

The NVIDIA H100 offers up to 30x faster inference and up to 9x faster training than the A100.

What are the key features of the H100 SXM GPU?

The key features of the NVIDIA H100 SXM include:

- SXM Form Factor: It is designed with high-density GPU configurations, efficient cooling, and energy optimisation, making it suitable for demanding applications.

- DGX Reference Architecture: Integrated with the DGX reference architecture, it meets the rigorous demands of enterprise-level AI and machine learning applications.

- Scalable Design: With a modular architecture, it allows for seamless scalability to meet evolving computational needs. It can be built into single clusters of more than 16K NVIDIA H100 cards.

- GPUDirect Technology: This technology enhances data movement and improves performance by enabling direct reading and writing to/from GPU memory, reducing CPU overheads and latency.

- NVLink & NVSwitch: By utilising NVLink and NVSwitch technologies, it provides significantly higher interconnect bandwidth than PCIe versions, enhancing overall performance.

- TDP of 700W: The NVIDIA H100 SXM is designed to operate at a higher Thermal Design Power (TDP) compared to PCIe versions, making it ideal for the most intensive AI and high-performance computing (HPC) applications that demand peak computational power.

What is the TDP of NVIDIA H100 SXM?

The NVIDIA H100 SXM has a TDP of up to 700 W.

What is the NVIDIA H100 SXM price?

The NVIDIA H100 SXM rental price starts at $2.40 per hour on Hyperstack. For long-term use, you can reserve the NVIDIA H100 SXM starting from $1.90/hour.
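As a rough guide to what those rates mean for a budget, the sketch below compares on-demand and reserved costs for a sample deployment. It assumes the quoted prices apply per GPU per hour, and the `rental_cost` helper, the 8-GPU count and the 30-day duration are hypothetical values chosen for illustration. Actual reserved pricing starts from the quoted rate and may differ for your configuration.

```python
# Illustrative cost arithmetic using the hourly rates quoted above.
# Assumption: rates are per GPU per hour; GPU count and duration are hypothetical.

ON_DEMAND_USD_PER_GPU_HOUR = 2.40  # on-demand rate quoted in the text
RESERVED_USD_PER_GPU_HOUR = 1.90   # "starting from" reserved rate quoted in the text

def rental_cost(gpus: int, hours: float, usd_per_gpu_hour: float) -> float:
    """Total cost in USD for renting `gpus` GPUs for `hours` hours."""
    return gpus * hours * usd_per_gpu_hour

GPUS = 8         # hypothetical deployment size
HOURS = 24 * 30  # hypothetical 30-day run

on_demand = rental_cost(GPUS, HOURS, ON_DEMAND_USD_PER_GPU_HOUR)
reserved = rental_cost(GPUS, HOURS, RESERVED_USD_PER_GPU_HOUR)

print(f"On-demand: ${on_demand:,.2f}")
print(f"Reserved:  ${reserved:,.2f}")
print(f"Saving:    ${on_demand - reserved:,.2f}")
```

Changing `GPUS` and `HOURS` to match your own workload gives a quick first estimate before you request a quote.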

Which cloud providers offer NVIDIA H100 SXM?

You can access the NVIDIA H100 SXM on-demand through Hyperstack.

What is the hourly cost of using NVIDIA H100 SXM on cloud platforms?

The hourly cost of using the NVIDIA H100 SXM varies by provider. On our cloud platform, you can access the NVIDIA H100 SXM on-demand for $2.40/hr.

How can I rent the NVIDIA H100 SXM GPU?

You can rent the NVIDIA H100 SXM GPU by accessing our console here.

Which cloud providers offer NVIDIA H200 SXM GPUs on demand?

Our commitment to excellence extends to our customer support. We offer 24/7 assistance, ensuring that expert help is always available whenever you need it. Our team is equipped to handle any query or challenge you might encounter with the HGX SXM H100.

Can I rent an H100 SXM GPU with flexible billing options?

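Yes. Hyperstack offers on-demand access at $2.40/hour and reservations starting from $1.90/hour for long-term use. You can rent the NVIDIA H100 SXM GPU on Hyperstack by accessing the console here.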

What are common use cases for the H100 SXM module in AI workloads?

The H100 SXM is ideal for cutting-edge LLM training, reinforcement learning, large-scale vision models, and FP8-optimised workloads that need extremely high compute and fast interconnect.